
Synthetic Chains Made with Dyneema® Help Ensure Diver Safety

As deadlines get tighter and ever-larger structures are expected to be installed, repaired or removed, underwater rigging providers are seeking ways to increase their...

Just another day at the office

Saturation Diving. A good day at the office. Saturation divers not only have the dangerous job of diving for long periods of time under hazardous...

New vs Used Commercial Diving Helmets

We recently published a survey on our Facebook page and received responses from over 100 commercial divers.

The results were closer than we had...

DIVER STORY: Experienced

Dominic wrote in and shared the amazing story of how his uncle got started as a commercial diver. Sadly, his uncle Brian passed away...

DIVER STORY: That was just wrong….

Story submitted by community member Jake Heckman. Thanks, Jake!

Post-Katrina and Rita work for Chevron on the Mighty Uncle John was grueling at times...

Making Beer Safe for the Ocean – Edible Beer 6-pack Rings

Earlier this year, Saltwater Brewing and WeBelievers came up with the idea and created the world's first edible six-pack rings for carrying beer.

The...

Commercial Diving Tribute Video

Cool video posted by YouTube user Emanuele Mulargia earlier this month. Some of the footage is from other videos you may have...

SubSea Global Solutions Acquires All-Sea Underwater Solutions

Initial Press Release

Combined entity will be a global leader in providing underwater ship repair, maintenance and marine construction solutions

SGS was actively seeking a partner...

Trident 4x Thruster Exchange, Walvisbaai, Namibia

Check out this video of a thruster exchange sent in by Bow Van Der Weijde.

https://www.youtube.com/watch?v=6CBPBbueMvs

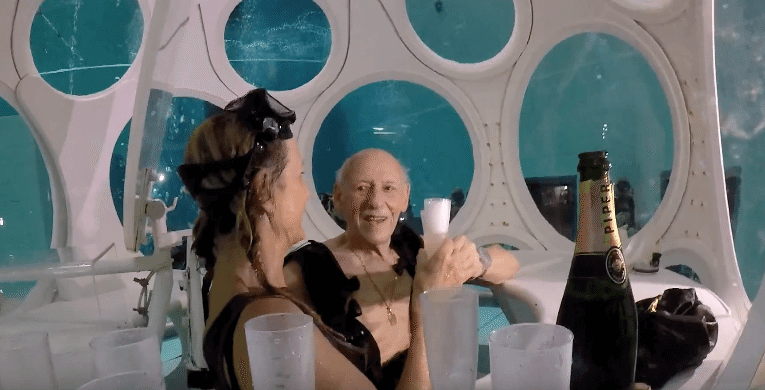

Underwater Diving Bell Restaurant

When was the last time your diving bell was fully stocked with Champagne & Lobster?

The Nemo 33 pool in Belgium is the deepest indoor...